You Will Never Be A Full Stack Developer

or, Career Advice For The Working Web Dev

I have been thinking a lot about the thing we call "the stack", one of many vague concepts web developers use when describing themselves. People call themselves "frontend", "backend" and "full stack", but there's no real consensus on what any of those terms mean.

What is the stack?

Part of the problem is that the "stack" is enormous: it includes at a minimum HTML, CSS and JavaScript. But how deep do you go? There are many server-side languages, there's networking to consider, there are application servers and HTTP servers and systems-level concerns. There are build tools, performance optimization, mobile experiences, API surfaces, databases and object stores. You could spend a lifetime enthusiastically trying to learn all of these things and never be done, and I know because that's exactly what I've been doing and I'm not done. So you almost certainly aren't either.

The stack is always evolving

The other part of the problem is that the stack keeps evolving: JavaScript and CSS didn't exist when I learned web development in late 1995, but hand-rolling your own HTTP server was still considered a reasonable thing to do. Writing PHP, which parsed HTTP headers for me, was considered "lazy" and "inefficient" and "not understanding the fundamentals". These same charges are still leveled at new devs today, only now it happens in the world of JavaScript, where the people advocating for the fundamentals are eight levels up the stack from where I was in 1995.

I have concluded that there is no such thing as the "fundamentals" in an ever-changing stack. Learn the thing that helps you get the job done. To get new things done you will probably need to learn things up and down the stack from where you start, and that's fine. Keep going at your own pace.

The stack, visualized

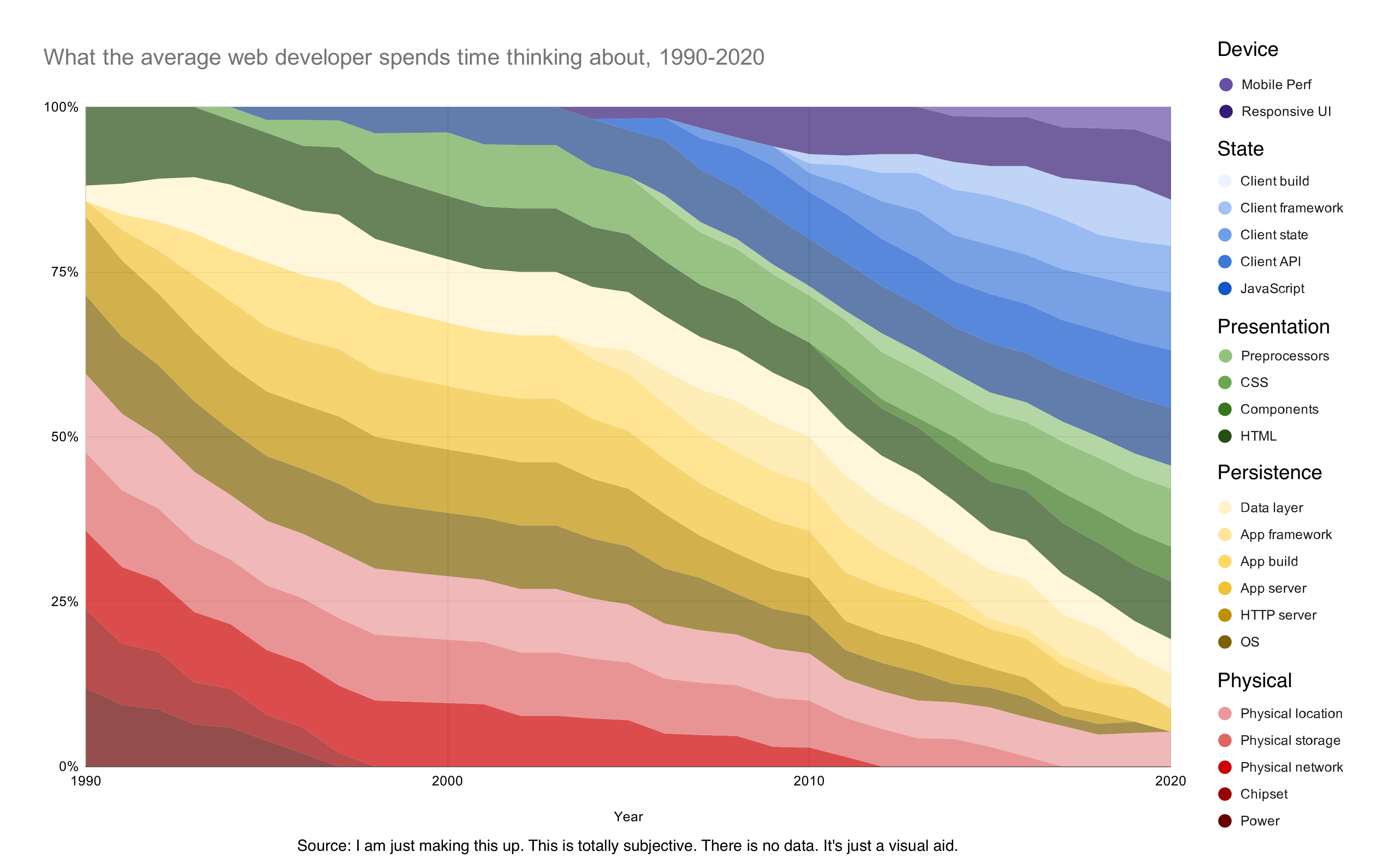

Thinking about the stack in this way made me want to visualize how the stack had changed. I threw a couple drafts out on Twitter and got a lot of good feedback and ended up with this, a 30 year history of web development summarized into a shifting rainbow of the stack (click through to embiggen):

It's important to be clear that this is NOT BASED ON ANY OBJECTIVE DATA. Do not come at me for sources. I made it all up. This is a totally subjective visualization. The "average" developer is hard to pin down and "what they spend time thinking about" is an even more vague idea. But enough developers have looked at this and said it roughly matches their experience that I'm willing to work with it, and think about what it's showing me.

Demand for software is outpacing the supply of developers

The first important point is that our brains aren't getting any larger, but the stack always is. Consumer expectations of web applications have risen relentlessly, so we're always having to think about new things. You could build a web app of 1995 quality in an afternoon today, but nobody would be happy with it.

The next important factor is that as the web has grown to become the dominant platform for all new software, the demand for developers has continued to far outpace supply. This shows up first as the absurd salaries of web developers at every level of the stack in 2020, but also as a constraint on team sizes: companies cannot throw hundreds of web developers at a problem because nobody can afford them.

Simplification is essential

The result of the dual pressures of ever-rising expectations and almost-flat team sizes is a relentless pressure to shrink the stack. We simply cannot think about everything at once, and we cannot build everything from scratch. We have to simplify the stack in order to be able to get things done.

The mechanisms by which we simplify the stack are threefold:

1. Standardization

A really great way to simplify things is to choose a default that nearly everyone uses. When everyone's working on the same platform, there are economies of scale that benefit everyone: bugs are fixed faster, there are better tutorials and documentation, and new tools get built on top of that platform.

A default is beneficial to everyone even if the default that wins isn't the best option available. This happened in the 1980s and 1990s with Windows for desktop operating systems. The public didn't sit down and have a meeting to adopt Windows; it just happened. It happened again in the early 2010s with Ubuntu: nobody sat down and had a meeting that said "we're going to run web servers on Ubuntu", but it is an overwhelmingly popular choice today. This same effect is arguably happening now with React at the component level.

With all of these examples, it doesn't really matter whether the default is objectively the best, or even if it's possible to pick a single default that is best for all use-cases. Having a de facto standard allows innovation to happen with the assumption of that platform in place. The simplification is more valuable than the choice itself.

2. Packaging

Another way to simplify things is to take a bunch of things that are often needed at the same time and package them up together so they work together seamlessly. Stripe is a great example of this: Stripe hasn't standardized how payments work globally; there is still a mess of banks and credit card companies and LLCs and fraud detection mechanisms and subscription management to think about. But you can get all of those things from Stripe at the same time, and the result is a radically simplified experience that developers love.

Obviously packaging also manifests at other levels. Package managers like my beloved npm aren't doing the packaging themselves; they provide a mechanism by which millions of authors package up functionality. Either way, packages are overwhelmingly how developers integrate software now.

AWS and the other cloud providers are also primarily in the packaging game. AWS didn't invent virtualization software, it just made it really easy to buy. Every kind of new database or server or processing project that gains traction quickly gets an AWS-hosted version. With a few notable exceptions they're not inventing new tech, they're just selling it very efficiently.

3. Abstraction

The final way we survive the ever-growing stack is by abstracting details away. Every software framework you've ever used is in the abstraction game: it takes a general-purpose tool, picks a specific set of common use-cases, and puts up scaffolding and guard rails that make it easier to build those specific use-cases by giving you less to do and fewer choices to think about.
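
To make that concrete, here is a minimal sketch of what "abstracting details away" looks like in code. Everything in it is made up for illustration: `parse_headers` stands in for the low-level work I used to do by hand, and `TinyApp` is a hypothetical toy framework, not any real library. It handles only the simplest well-formed requests.

```python
from typing import Callable


def parse_headers(raw: str) -> dict[str, str]:
    """Low-level layer: pull headers out of a raw HTTP request by hand."""
    head, _, _body = raw.partition("\r\n\r\n")
    headers: dict[str, str] = {}
    for line in head.split("\r\n")[1:]:  # skip the request line
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return headers


class TinyApp:
    """Hypothetical toy framework: hides parsing and routing behind one decorator."""

    def __init__(self) -> None:
        self.routes: dict[str, Callable[[dict[str, str]], str]] = {}

    def route(self, path: str):
        def register(handler):
            self.routes[path] = handler
            return handler
        return register

    def handle(self, raw: str) -> str:
        # The framework deals with the request line and headers...
        request_line = raw.split("\r\n", 1)[0]
        _method, path, _version = request_line.split(" ")
        handler = self.routes.get(path)
        if handler is None:
            return "404 Not Found"
        # ...so the handler only ever sees a tidy dict.
        return handler(parse_headers(raw))


app = TinyApp()


@app.route("/hello")
def hello(headers: dict[str, str]) -> str:
    return f"Hello, {headers.get('user-agent', 'stranger')}"
```

The person writing `hello` never thinks about `\r\n` or request lines. They have fewer choices (this toy can't even distinguish GET from POST), and in exchange they have one less layer to hold in their head.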

The lines between these three are blurry. Popular abstractions become standardizations. Good packaging tends to abstract some details away. But these three lenses are useful for thinking about the state of things.

One thing to note is that every form of simplification comes with a cost. By picking a standard, you save yourself time and gain tooling but lose potential efficiency from a solution more specifically designed for your use case. By picking a packaged solution, you are often paying a literal cost to a vendor. By picking an abstraction, you are almost always losing some amount of resource efficiency by putting an additional layer of code between you and your problem. All simplification involves trade-offs.

Simplification is worth the costs

A lot of complaints from web developers come from the sense that these trade-offs are not worth it, or at least that we are making them without fully considering all our options. Sometimes that's true, but more often the mere fact of not needing to think about the problem at all outweighs any potential benefits. A new developer could spend years figuring out the perfect configuration of HTTP servers for maximum throughput and efficiency -- or they could ship tomorrow with whatever the default is and never spend any time at all thinking about it. In an ever-growing stack, the ideal solution is one that lets you eliminate a layer entirely from consideration.

Simplification is a self-perpetuating cycle

A side-effect of the push for simplification is that it creates a feedback loop. By making it easier to build our apps, we make it easier for everyone to build apps. This results in the quality of web software constantly rising. This in turn fuels consumer expectations, further increasing the pressure that created the simplification in the first place.

The whole stack is still there

Obviously, the layers in my stack that go away don't really go away. Developers are still working on hardware and networking and operating systems; in fact, more of them than ever before. There are people making absurd amounts of money designing new microchips every day. But they are vastly outnumbered by developers further up the stack who are not thinking about those things at all. And, and this is the important part: that's fine.

The stack is too big to learn

You can't learn the whole stack. Nobody can. Maybe it was possible in 1990, the day after the web was invented, but I'm not even sure about that. The stack grows and shifts and evolves every day. We are constantly throwing more money and more processor cycles away by using standards, packages and abstractions, and that's fine too. These costs are usually worth it today, and even if they aren't today they will be six months from now as these things get cheaper and more efficient all the time.

What does this mean for me?

I can see a few lessons to pull out of thinking about the stack in this way.

1. It feels like you're constantly running just to keep up.

You're not imagining that. If you aren't learning new things all the time, you will be constantly drifting down-stack. The things you know will keep losing value: people may settle on a standard that isn't the thing you know. Your area of expertise may be packaged up into a single, easy-to-buy product so nobody will ask you to build it from scratch. The details and subtleties that you provide value by understanding today will be wrapped in an abstraction tomorrow.

2. You can choose to specialize, but be careful.

There are still chip designers, and people who write operating systems, and network stack specialists. If you get really good at an important layer of the stack, you can stay there forever and make a lot of money doing it. But choose wisely: if your speciality gets eliminated by a standard, or an abstraction becomes good enough that nobody needs to iterate on it further, you can find yourself in a shrinking pool of job opportunities.

3. You can constantly run up the stack, but be careful.

The alternative to picking a few layers to settle down in is to constantly move up-stack, learning the newest and highest-value things. By default this is where most people will end up, because in general there are more people working on the stack the higher up you go. But be careful going too far: it's not always clear what the next big thing is going to be, and you can end up learning a dozen new abstractions that nobody ends up using, or getting locked into a packaged solution that gets overtaken by a competitor.

This is fine

There's a way of looking at this interpretation of the career landscape of a web developer that can seem daunting: you're constantly under pressure to do better, to learn new things, always at risk of being outmoded or made redundant.

But I choose to think about it a different way: web development is a career where I will never be bored. I will never have learned everything there is to know. I will never get stuck using one tool or framework for a decade. And for the same amount of effort, the work I produce this year will always be better than the work I did the year before. Perhaps not more efficient, but more sophisticated and more appreciated by my users. And the inexorable simplification of the stack is what helps me do it.

Further questions

This post is already too long, so I've ended it here, but I have a bunch of further exploration I want to do on this topic:

- Where is the current stack going to go? What are the new layers yet to arrive? Which of the existing layers are destined to vanish?

- Does this model of the stack give a clue as to which tech and which companies in the space will succeed and which will fail? (Spoiler: yes)

- Is there any idea for a new startup or two hidden in here? Maybe.